2D Rotations

In the last post we saw that we can use matrices to perform various kinds of transformations on points in space. We can stretch, flip, and scale them, but the important one for us is rotation. Robots don't suddenly scale their size up and down, and they certainly don't mirror themselves along an axis, but one thing they do quite frequently is rotate. Because of this, it's important that we have a solid mathematical understanding of the rotation of points in space.

Deriving the rotation matrix

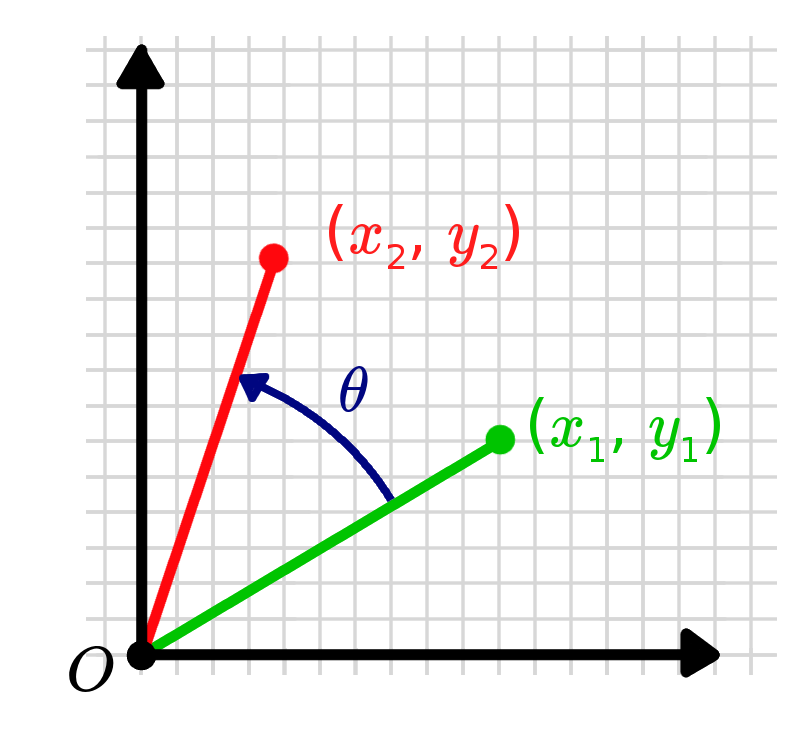

Say we have a point $(x_1, y_1)$ and we want to find the transformation matrix that will rotate it (anticlockwise) around the origin by an angle $\theta$ to a new point, $(x_2, y_2)$.

In other words, we're looking for values to satisfy an equation that looks something like this:

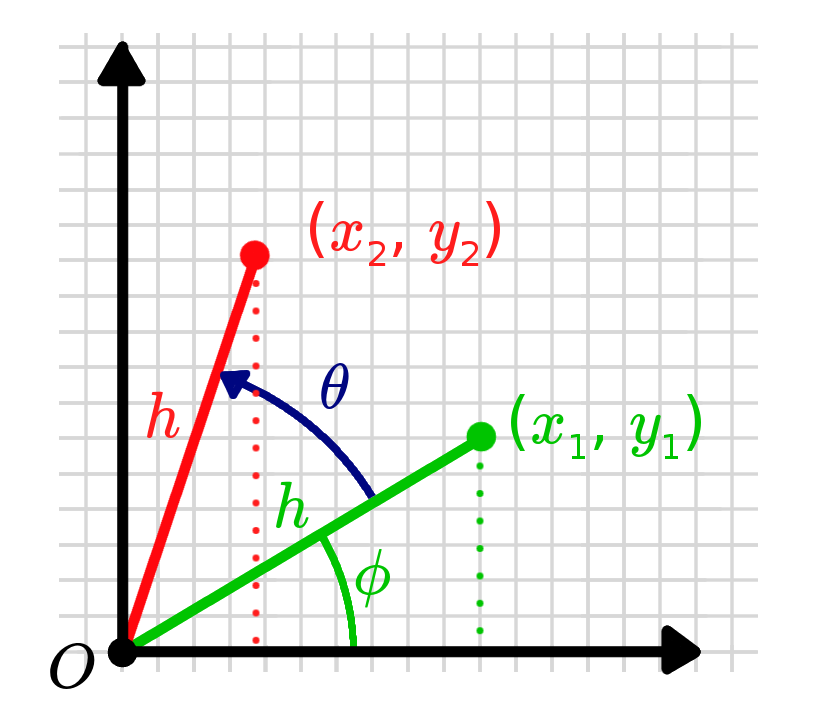

To tackle this, we'll start by adding some extra variables to make life easier. We'll add $r$, the distance from the point to the origin (the hypotenuse of the triangle formed by $x_1$, $y_1$, and $r$), and $\phi$, the angle the hypotenuse makes with the x-axis.

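We can now express $x_1$ and $y_1$ in terms of the distance $r$ and the angle $\phi$ in the following way:

$$
x_1 = r\cos\phi, \qquad y_1 = r\sin\phi
$$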

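We can express the new, rotated point $(x_2, y_2)$ similarly. It should be at the same distance from the origin, $r$, but at a new angle, $\phi + \theta$. Then, by using the angle-sum trig identities, we can substitute our original coordinates $x_1 = r\cos\phi$ and $y_1 = r\sin\phi$ back in.

$$
\begin{aligned}
x_2 &= r\cos(\phi + \theta) = r\cos\phi\cos\theta - r\sin\phi\sin\theta = x_1\cos\theta - y_1\sin\theta \\
y_2 &= r\sin(\phi + \theta) = r\sin\phi\cos\theta + r\cos\phi\sin\theta = x_1\sin\theta + y_1\cos\theta
\end{aligned}
$$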
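The substitution step can be sanity-checked numerically. The sketch below is only an illustration: the sample point, the angle, and the variable names (`r`, `phi`, `x2_polar`, `x2_expanded`, and so on) are assumptions chosen for the example, not values from the post. It computes the rotated point once from the polar form and once from the expanded form, and compares the two.

```matlab
% Sample point and rotation angle (arbitrary values, angle in radians)
x1 = 2;
y1 = 1;
theta = 30 * pi / 180;

% Extra variables: distance to the origin and angle from the x-axis
r = hypot(x1, y1);
phi = atan2(y1, x1);

% Rotated point from the polar form: same distance, new angle
x2_polar = r * cos(phi + theta);
y2_polar = r * sin(phi + theta);

% Rotated point from the expanded form (after the trig identities)
x2_expanded = x1 * cos(theta) - y1 * sin(theta);
y2_expanded = x1 * sin(theta) + y1 * cos(theta);

% The two forms should agree up to floating-point rounding
fprintf('difference in x: %g\n', abs(x2_polar - x2_expanded));
fprintf('difference in y: %g\n', abs(y2_polar - y2_expanded));
```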

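We can now pull out the coefficients of $x_1$ and $y_1$ and express this as a matrix multiplied by the first point:

$$
\begin{bmatrix} x_2 \\ y_2 \end{bmatrix}
=
\begin{bmatrix} \cos\theta & -\sin\theta \\ \sin\theta & \cos\theta \end{bmatrix}
\begin{bmatrix} x_1 \\ y_1 \end{bmatrix}
$$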

And that's it! That matrix is the 2D rotation matrix. You can multiply it by any point (or series of points) to rotate them anticlockwise about the origin by the angle $\theta$. If you'd like to see some examples, you can scroll to the bottom of the page. But first, we'll take a closer look at three important properties that a rotation matrix has.
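As a quick illustration of multiplying the matrix by a single point and by a series of points, here is a minimal sketch. The names `R`, `p`, and `pts` and the chosen points are assumptions for the example. A 90 degree rotation is used because the result is easy to check by eye: the point (1, 0) on the x-axis should land on (0, 1) on the y-axis.

```matlab
% 2D rotation matrix for an anticlockwise rotation of theta degrees
theta = 90;
R = [cosd(theta), -sind(theta);
     sind(theta),  cosd(theta)];

% Rotate a single point, stored as a column vector
p = [1; 0];
p_rot = R * p;
disp(p_rot.');

% Rotate a series of points at once: one point per column
pts = [1, 0, 2;
       0, 1, 2];
pts_rot = R * pts;
disp(pts_rot);
```

Storing one point per column means a whole set of points can be rotated with a single matrix product.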

Properties of rotation matrices

Rotation matrices have a few important properties that make them really useful. They might seem a bit trivial to begin with, but as we work with more complex equations and many matrices, they will save a lot of headaches!

Inverse = Transpose

The first property to be aware of is that the inverse of a rotation matrix is its transpose. Computing the inverse of a general matrix can be quite difficult (or even impossible), but with a rotation matrix it becomes very straightforward.

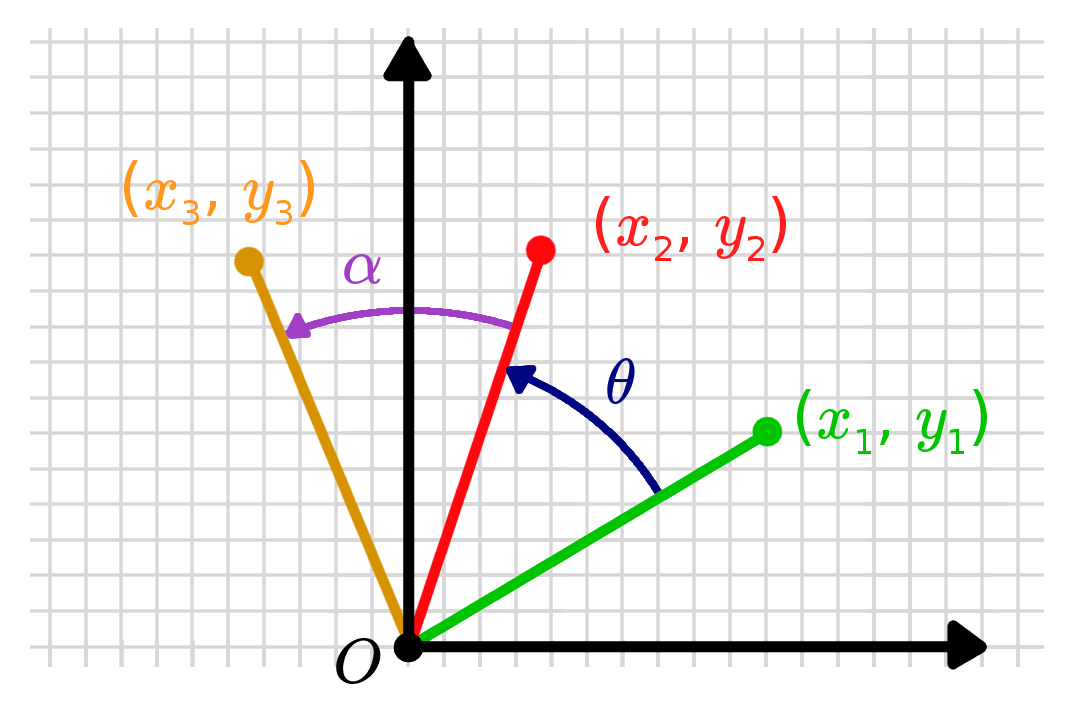

There's actually a pretty simple way to understand this relationship. Imagine that we have a point $p_2$ that we got by rotating a point $p_1$ by an angle $\theta$. Inverting this transformation is the same as "un-rotating" the point, or equivalently rotating it by an angle $-\theta$.

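Using the fact that the cosine function is even ($\cos(-\theta) = \cos\theta$) and the sine function is odd ($\sin(-\theta) = -\sin\theta$), the result can be proved quite easily. Writing $R(\theta)$ for the rotation matrix with angle $\theta$:

$$
R(\theta)^{-1} = R(-\theta)
= \begin{bmatrix} \cos(-\theta) & -\sin(-\theta) \\ \sin(-\theta) & \cos(-\theta) \end{bmatrix}
= \begin{bmatrix} \cos\theta & \sin\theta \\ -\sin\theta & \cos\theta \end{bmatrix}
= R(\theta)^{T}
$$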
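The same relationship can be checked numerically. This is a sketch under assumed names (`R`, `R_neg`, and the `err_*` variables are illustrative): it compares the transpose of a rotation matrix with both its numerically computed inverse and the rotation matrix for the negated angle.

```matlab
% Rotation by theta degrees, and rotation by -theta degrees
theta = 30;
R = [cosd(theta), -sind(theta);
     sind(theta),  cosd(theta)];
R_neg = [cosd(-theta), -sind(-theta);
         sind(-theta),  cosd(-theta)];

% The transpose should match the inverse and the "un-rotation"
err_inverse = norm(R.' - inv(R));
err_negative = norm(R.' - R_neg);

% Equivalently, transpose times original should be the identity
err_identity = norm(R.' * R - eye(2));

fprintf('transpose vs inverse:     %g\n', err_inverse);
fprintf('transpose vs R(-theta):   %g\n', err_negative);
fprintf('transpose * R vs eye(2):  %g\n', err_identity);
```

In practice this means `R.'` can be used wherever `inv(R)` would be needed, which is cheaper and avoids the rounding error of a general matrix inversion.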

Determinant = 1

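The second property is that the determinant of every rotation matrix is equal to one, no matter what the value of $\theta$ is. Again, this is pretty straightforward to prove using the equation for the determinant of a 2D matrix and the identity $\cos^2\theta + \sin^2\theta = 1$.

$$
\det \begin{bmatrix} \cos\theta & -\sin\theta \\ \sin\theta & \cos\theta \end{bmatrix}
= (\cos\theta)(\cos\theta) - (-\sin\theta)(\sin\theta)
= \cos^2\theta + \sin^2\theta
= 1
$$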
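To see that the determinant does not depend on the angle, the sketch below evaluates it over a sweep of angles. The sweep range and the names `thetas`, `dets`, and `max_dev` are assumptions for the example.

```matlab
% Sweep of angles in degrees
thetas = 0:15:360;
dets = zeros(size(thetas));

% Determinant of the rotation matrix at each angle
for k = 1:numel(thetas)
    t = thetas(k);
    R = [cosd(t), -sind(t);
         sind(t),  cosd(t)];
    dets(k) = det(R);
end

% Largest deviation from 1 across the sweep (rounding error only)
max_dev = max(abs(dets - 1));
fprintf('max |det(R) - 1| = %g\n', max_dev);
```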

Rotation × Rotation = Rotation

Rotation matrices have the property that if you multiply two of them together, you always get another rotation matrix. That is, the product has the same properties as above and represents another rotation in space. In the 2D case its angle is simply the sum of the two original angles, but in 3D it will get more interesting.

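Again, we can prove this fairly easily with the angle-sum trig identities. For two rotation angles $\alpha$ and $\beta$:

$$
\begin{aligned}
\begin{bmatrix} \cos\alpha & -\sin\alpha \\ \sin\alpha & \cos\alpha \end{bmatrix}
\begin{bmatrix} \cos\beta & -\sin\beta \\ \sin\beta & \cos\beta \end{bmatrix}
&= \begin{bmatrix}
\cos\alpha\cos\beta - \sin\alpha\sin\beta & -\cos\alpha\sin\beta - \sin\alpha\cos\beta \\
\sin\alpha\cos\beta + \cos\alpha\sin\beta & -\sin\alpha\sin\beta + \cos\alpha\cos\beta
\end{bmatrix} \\
&= \begin{bmatrix}
\cos(\alpha + \beta) & -\sin(\alpha + \beta) \\
\sin(\alpha + \beta) & \cos(\alpha + \beta)
\end{bmatrix}
\end{aligned}
$$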
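A numerical version of the same check, as a rough sketch: `rot2d` is a hypothetical helper defined here for the example (not a built-in function), and the angles are arbitrary. It confirms that the product of two 2D rotation matrices matches the rotation matrix for the summed angle, and that in 2D the order of multiplication makes no difference.

```matlab
% Hypothetical helper: 2D rotation matrix for an angle t in degrees
rot2d = @(t) [cosd(t), -sind(t); sind(t), cosd(t)];

% Two arbitrary rotation angles
a = 30;
b = 45;

% Products in both orders, and the rotation by the summed angle
R_ab = rot2d(a) * rot2d(b);
R_ba = rot2d(b) * rot2d(a);
R_sum = rot2d(a + b);

% Both differences should be at the level of rounding error
err_sum = norm(R_ab - R_sum);
err_commute = norm(R_ab - R_ba);
fprintf('R(a)*R(b) vs R(a+b):    %g\n', err_sum);
fprintf('R(a)*R(b) vs R(b)*R(a): %g\n', err_commute);
```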

Other Properties (and other names!)

Rotation matrices also have some other properties that we won't explore here. One thing worth noting is that the fancy mathematical name for the group of all rotation matrices is the "special orthogonal group" $SO(n)$ (for a particular dimension $n$). You might sometimes see it written that a matrix is in $SO(2)$ or $SO(3)$; this simply means it is a rotation matrix in 2D or 3D respectively.
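The first two properties above (transpose equals inverse, determinant equals one) are exactly the conditions that define membership in the special orthogonal group, so they can be turned into a simple test. The sketch below is an illustration only: `is_rotation` is a hypothetical helper, and the tolerance `tol` is an assumed value for absorbing rounding error.

```matlab
% Assumed tolerance for floating-point comparisons
tol = 1e-12;

% Hypothetical helper: true if M is orthogonal with determinant +1
is_rotation = @(M) (norm(M.' * M - eye(size(M, 1))) < tol) && ...
                   (abs(det(M) - 1) < tol);

% A rotation, a reflection, and a uniform scaling
R = [cosd(30), -sind(30);
     sind(30),  cosd(30)];
F = [1,  0;
     0, -1];          % reflection: orthogonal, but determinant is -1
S = 2 * eye(2);       % scaling: not orthogonal

in_so2_R = is_rotation(R);
in_so2_F = is_rotation(F);
in_so2_S = is_rotation(S);
disp([in_so2_R, in_so2_F, in_so2_S]);
```

Only the first matrix passes: the reflection fails the determinant condition and the scaling fails the orthogonality condition.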

Where to next?

Now that we have the mathematics of 2D rotations down pat, we can start to work on 3D - an important topic since our robots are all operating in a 3D world (even if some calculations can be simplified to 2D). The other question we still haven't answered is how to translate our robot, since sitting in one location and spinning around in circles isn't usually very useful.

Examples


MATLAB/Octave

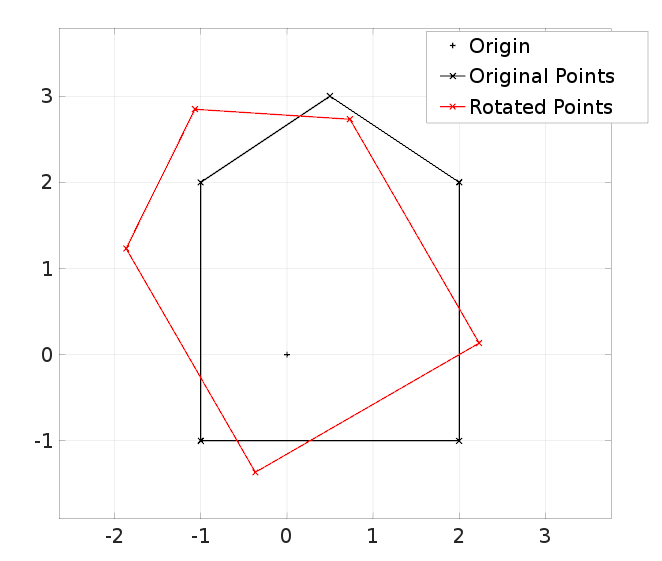

```matlab
% Set up an array of points (one point per column)
x_points = [2, 2, 0.5, -1, -1, 2];
y_points = [-1, 2, 3, 2, -1, -1];
points = [x_points; y_points];

% Rotation matrix (theta in degrees, anticlockwise)
theta = 30;
rot_mat = [cosd(theta), -sind(theta);
           sind(theta),  cosd(theta)];

% Rotate the points one column at a time
% (equivalent to the single product rot_mat * points)
rot_pts = zeros(size(points));
for p = 1:size(points, 2)
    rot_pts(:,p) = rot_mat * points(:,p);
end

% Plots
clf;
plot(0, 0, '+k', 'DisplayName', 'Origin');
hold on; grid on;
plot(points(1,:), points(2,:), 'x-k', 'DisplayName', 'Original Points');
plot(rot_pts(1,:), rot_pts(2,:), 'x-r', 'DisplayName', 'Rotated Points');
legend show; axis equal;
```

Extra Resources

- Wikipedia has an article covering some of the features of both 2D and 3D rotation matrices.